Video Dataset of Human Demonstrations of Folding Clothing For Robotic Folding

Andreas Verleysen, Matthijs Biondina and Francis wyffels

IDLab-AIRO, Ghent University-imec

Radboud University, Netherlands

Introduction

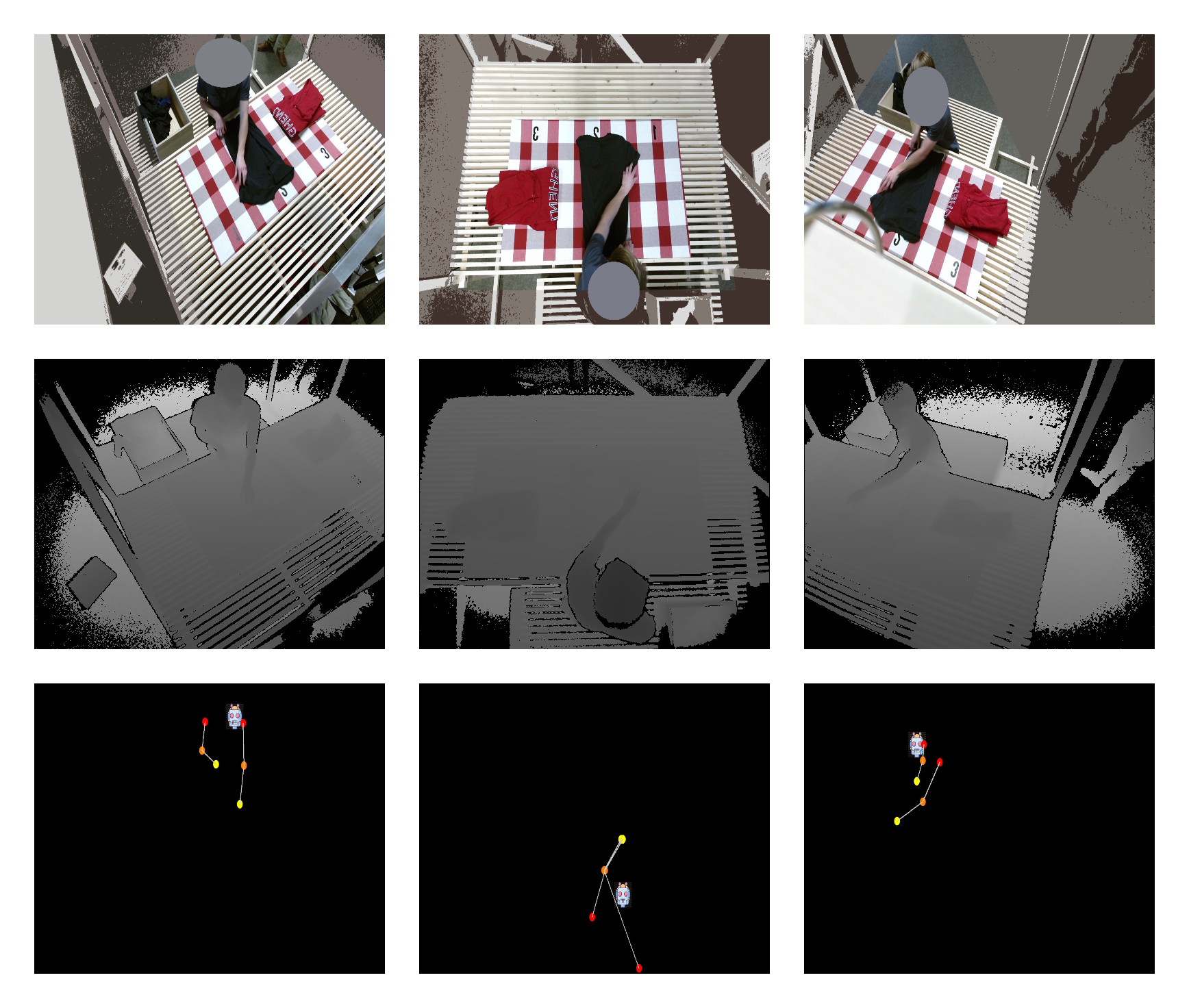

General-purpose cloth-folding robots do not yet exist because textiles are deformable, which makes it hard to engineer manipulation pipelines or to learn the task. To accelerate research on learning robotic folding, we introduce a video dataset of human folding demonstrations. In total, we provide 8.5 hours of demonstrations recorded from multiple perspectives, yielding 1,000 folding samples of different types of textiles. The demonstrations were recorded in multiple public places, under different conditions, and performed by random people. Our dataset consists of anonymized RGB images, depth frames, skeleton keypoint trajectories, and object labels.

Features

Each folding demonstration in this dataset is described by the following features:

- RGB frames

- Depth values

- Skeleton keypoint trajectories

- Subtask labeling

- Descriptive labels such as folding method and type of textile being folded
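As a rough illustration of how these features could fit together in code, the sketch below defines a hypothetical `FoldingSample` container. The class name, field names, per-frame label layout, and the example label strings are all assumptions made for illustration; they are not the dataset's actual schema or file format.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class FoldingSample:
    """Hypothetical container for one folding demonstration (assumed schema)."""
    rgb_frames: List[str]                       # paths to anonymized RGB frames
    depth_frames: List[str]                     # paths to depth frames
    keypoints: List[List[Tuple[float, float]]]  # per frame: (x, y) per skeleton joint
    subtask_labels: List[str]                   # assumed: one subtask label per frame
    folding_method: str                         # descriptive label
    textile_type: str                           # descriptive label

    def is_aligned(self) -> bool:
        """Check that every per-frame feature has the same number of frames."""
        n = len(self.rgb_frames)
        per_frame = (self.depth_frames, self.keypoints, self.subtask_labels)
        return all(len(feature) == n for feature in per_frame)

    def subtask_segments(self) -> List[Tuple[str, int, int]]:
        """Collapse per-frame labels into (label, start, end) runs, end exclusive."""
        labels = self.subtask_labels
        segments = []
        start = 0
        for i in range(1, len(labels) + 1):
            # Close the current run at the end of the list or when the label changes.
            if i == len(labels) or labels[i] != labels[start]:
                segments.append((labels[start], start, i))
                start = i
        return segments


# Usage with made-up values (label names are hypothetical).
sample = FoldingSample(
    rgb_frames=["0.png", "1.png", "2.png"],
    depth_frames=["0.depth", "1.depth", "2.depth"],
    keypoints=[[(0.1, 0.2)], [(0.1, 0.3)], [(0.2, 0.3)]],
    subtask_labels=["grasp", "grasp", "fold"],
    folding_method="example-method",
    textile_type="towel",
)
```

Grouping per-frame labels into segments is one convenient way to recover subtask boundaries if labels are stored per frame; a dataset that stores start and end timestamps instead would not need this step.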

Downloads

The following downloads are available:

Directory hierarchy

The samples are split and organized per folding demonstration of one piece of textile.

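Since the samples are organized per folding demonstration, a loader would typically enumerate one directory per demonstration. The sketch below assumes a layout of one subdirectory per demonstration directly under a root folder; that layout and the function name `list_demonstrations` are assumptions for illustration and should be checked against the actual downloaded archive.

```python
from pathlib import Path
from typing import Dict, List


def list_demonstrations(root: str) -> Dict[str, List[str]]:
    """Map each demonstration directory under `root` to its sorted file names.

    Assumes (hypothetically) one subdirectory per folding demonstration.
    """
    demos: Dict[str, List[str]] = {}
    for entry in sorted(Path(root).iterdir()):
        if entry.is_dir():
            demos[entry.name] = sorted(p.name for p in entry.iterdir() if p.is_file())
    return demos
```

Calling `list_demonstrations("path/to/dataset")` returns a dictionary keyed by directory name, which can then be iterated to load the files of one demonstration at a time.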